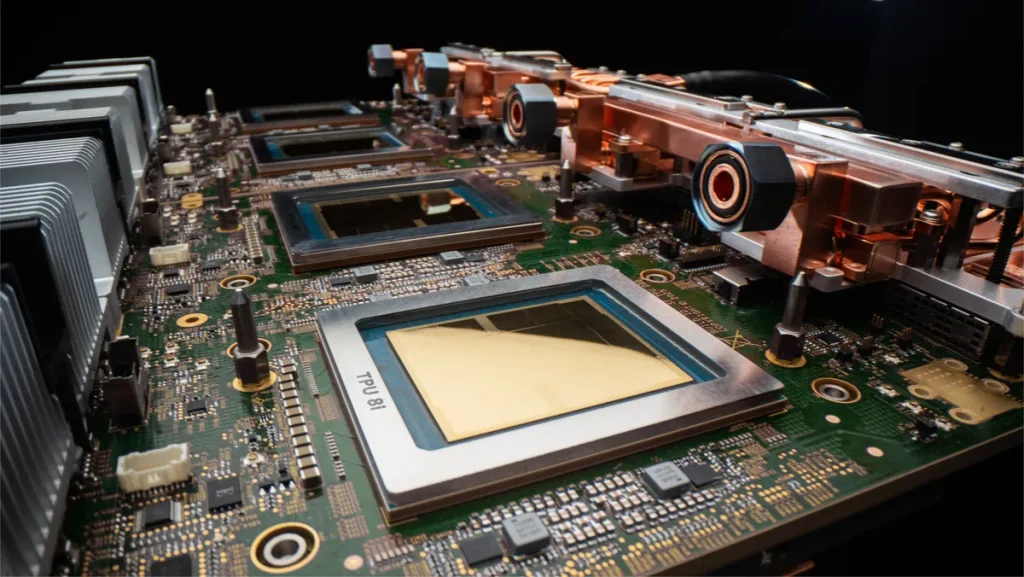

Google has announced its eighth-generation custom Tensor Processing Units, the TPU 8t and TPU 8i. Google says the new chips have an architecture designed to handle the growing demands of frontier model development and agentic workloads.

According to Google, the new chips represent a fundamental split in its hardware strategy: the TPU 8t is a training-optimized processor, while the TPU 8i is a low-latency inference specialist. Both are paired with Google’s custom Arm-based Axion CPUs as the system host. Google says this is the first time it has used its own silicon across the entire host, with the goal of eliminating performance bottlenecks.

On paper, the TPU 8t is engineered to accelerate the frontier model development cycle by providing approximately three times more compute performance per pod than its predecessor. A single TPU 8t superpod now reaches 9,600 chips, providing 121 exaflops of compute and two petabytes of shared high-bandwidth memory. Google has also integrated the Virgo Network fabric and 10x faster storage access via TPUDirect, which it says allows nearly linear scaling up to one million chips in a single logical cluster for model training.
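As a rough sanity check on those pod-level figures, the per-chip averages follow from simple division. The sketch below assumes decimal units (1 exaflop = 1,000 petaflops, 1 petabyte = 1,000,000 gigabytes) and treats the quoted numbers as exact. The article does not state the numeric precision behind the exaflops figure, so the results are only indicative. All variable and function names are illustrative.

```python
# Pod-level figures as quoted in the article (rounded headline numbers).
CHIPS_PER_POD = 9_600
POD_EXAFLOPS = 121
POD_HBM_PETABYTES = 2


def per_chip(total, chips):
    """Return the average share of a pod-wide total per chip."""
    return total / chips


# Assumption: decimal prefixes, so exa -> peta is x1,000 and peta -> giga is x1,000,000.
per_chip_petaflops = per_chip(POD_EXAFLOPS * 1_000, CHIPS_PER_POD)
per_chip_hbm_gb = per_chip(POD_HBM_PETABYTES * 1_000_000, CHIPS_PER_POD)

print(f"{per_chip_petaflops:.1f} PFLOP/s per chip")
print(f"{per_chip_hbm_gb:.0f} GB of HBM per chip")
```

Under those assumptions, the quoted totals work out to roughly 12.6 petaflops and about 208 GB of high-bandwidth memory per chip.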

Meanwhile, the TPU 8i addresses the specific challenges of agentic AI, where multiple distinct models must interact with minimal lag. It features 384 MB of on-chip SRAM (three times the capacity of its predecessor) so that active working sets can be held entirely on-chip. The architecture doubles interconnect bandwidth to 19.2 Tb/s and uses a boardfly topology, which Google says reduces network diameter by more than 50%.
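The ratios quoted for the TPU 8i also imply figures for its predecessor. The sketch below simply inverts them, assuming "three times" and "doubles" are exact multipliers and that Tb/s means terabits per second (8 bits per byte). The derived predecessor values come from the article's ratios only and are not independently confirmed. Names are illustrative.

```python
# TPU 8i figures as quoted in the article.
SRAM_MB = 384
SRAM_RATIO_VS_PREDECESSOR = 3       # "three times the capacity"
INTERCONNECT_TBITS = 19.2
INTERCONNECT_RATIO_VS_PREDECESSOR = 2   # "doubles interconnect bandwidth"
BITS_PER_BYTE = 8

# Invert the quoted ratios to get the implied predecessor figures.
implied_prev_sram_mb = SRAM_MB / SRAM_RATIO_VS_PREDECESSOR
implied_prev_interconnect_tbits = INTERCONNECT_TBITS / INTERCONNECT_RATIO_VS_PREDECESSOR

# Convert terabits per second to terabytes per second.
interconnect_tbytes = INTERCONNECT_TBITS / BITS_PER_BYTE

print(f"Implied predecessor SRAM: {implied_prev_sram_mb:.0f} MB")
print(f"Implied predecessor interconnect: {implied_prev_interconnect_tbits:.1f} Tb/s")
print(f"TPU 8i interconnect: {interconnect_tbytes:.1f} TB/s")
```

Read this way, the quoted ratios imply a predecessor with 128 MB of on-chip SRAM and 9.6 Tb/s of interconnect bandwidth, and the TPU 8i's 19.2 Tb/s corresponds to 2.4 TB/s.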

Both chips are co-designed with Google’s Gemini model in mind and are open to the broader developer ecosystem, including native support for JAX, PyTorch and vLLM, as well as bare-metal access to give customers direct hardware control.