Kelvin Wankhede/Android Authority

TL;DR

- ChatGPT is getting a new “Trusted Contacts” feature.

- This allows adults to add a trusted contact that ChatGPT can alert for assistance in moments of crisis.

- OpenAI is also using human reviewers to determine whether interactions indicate safety concerns.

ChatGPT is one of the most popular AI chatbots. Users often rely on ChatGPT to chat about everything, including suicide. In fact, ChatGPT has been involved in several self-harm cases, and OpenAI has even been sued over such incidents. Now, ChatGPT is introducing a new “Trusted Contacts” feature to help users get access to real-world support in such situations.

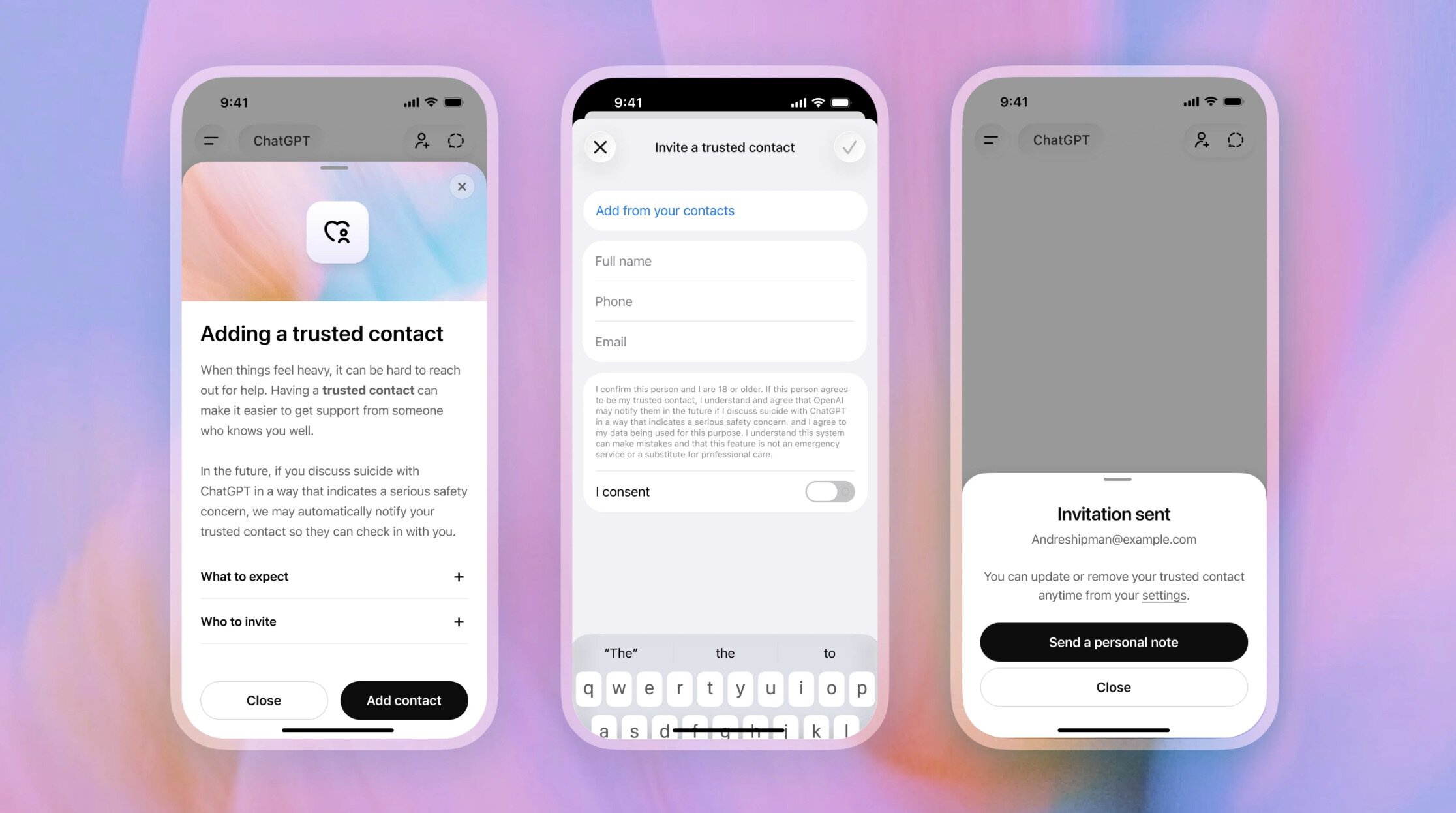

Interested adult users of ChatGPT can add a trusted adult contact (18+ globally or 19+ in South Korea) in their ChatGPT settings. The added contact will receive an invitation and can choose to accept or decline it.

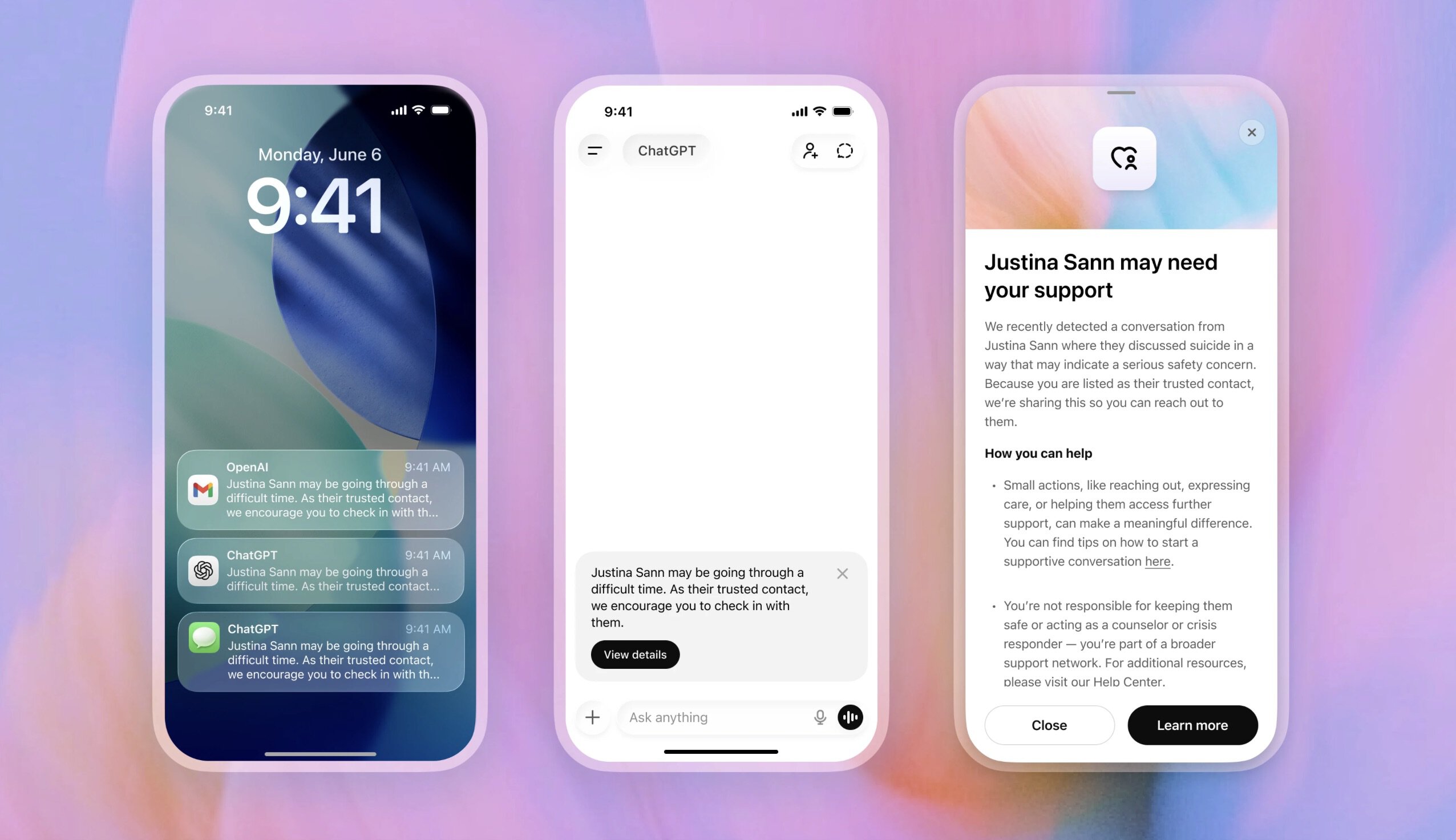

Once the feature is set up, the user does not need to do anything else. During their conversation, if ChatGPT detects that they are discussing suicide, it will tell the user that their trusted contact may be notified.

However, OpenAI does not rely solely on automated monitoring systems to detect this. A team of specially trained human reviewers will also review the conversations. This team is responsible for determining whether an interaction indicates a safety concern and for making the final decision on whether the trusted contact needs to be notified.

This feature is optional, and OpenAI states that even when used, the trusted contact will not receive a transcript of users’ chats. This is to ensure confidentiality. The trusted contact will simply receive a notification encouraging them to check in with the user.

This new feature adds to ChatGPT’s existing safety measures for sensitive conversations, which include encouraging people to contact a helpline and even to take a break from using ChatGPT.
